Human-Robot Interaction

Real-time Perception & Behaviour Selection

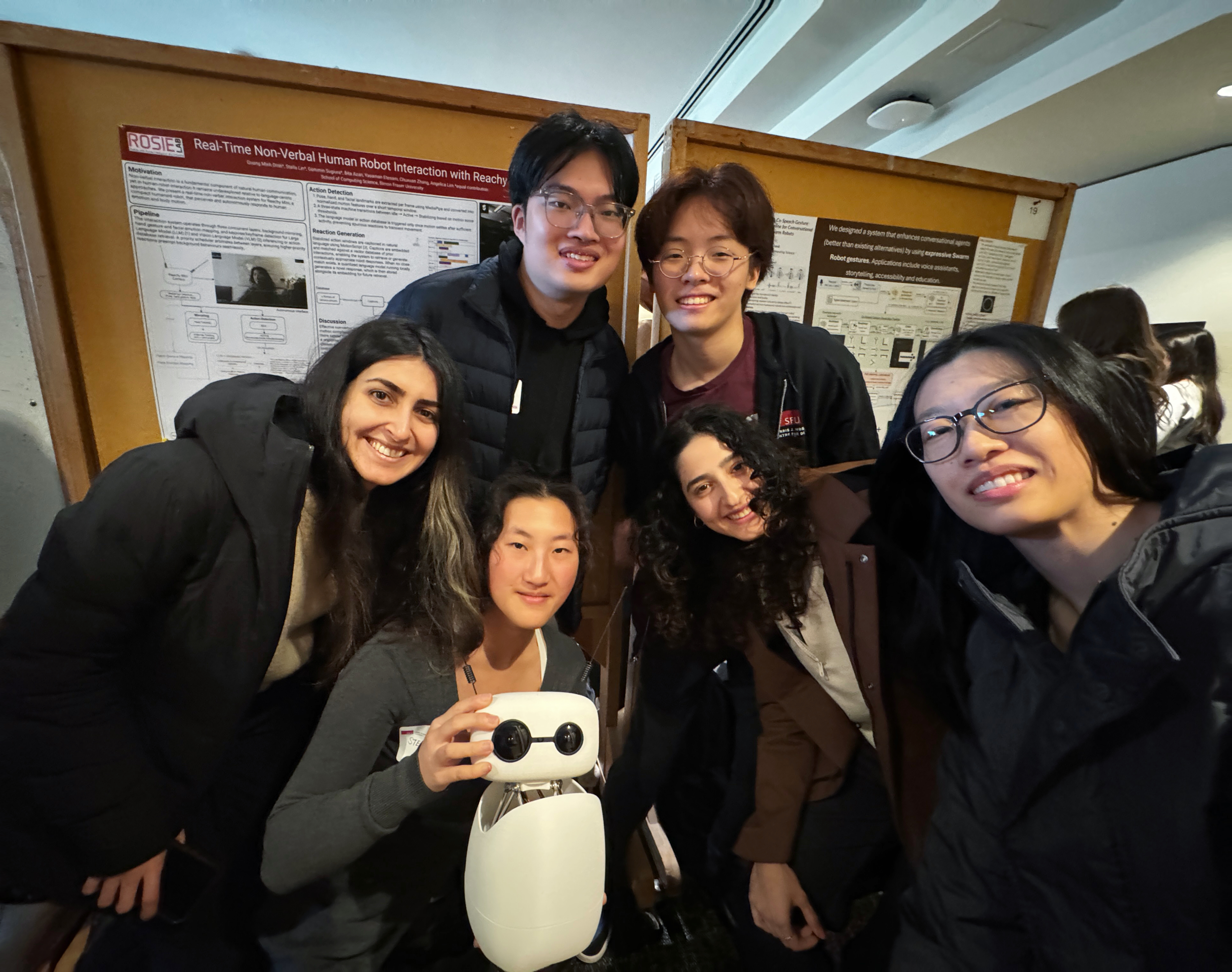

I'm the red-haired person in the photos. I built a web-based teleoperation system for the Reachy Mini robot, which served as the human-controlled baseline in our Turing-style evaluation. I also integrated MediaPipe with a real-time emotion recognition model for facial and gesture perception, and deployed a local vision-language model (Qwen2.5-0.5B-Q4_K_M) to select among 81 pre-recorded expressive behaviors. The whole pipeline runs fully offline on consumer hardware with ~3 s end-to-end latency. In user studies, participants correctly distinguished autonomous from human control only 38% of the time, below the 50% chance level. Thank you to Dr. Angelica Lim for supervising this project. Project website →
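
The behavior-selection step can be illustrated with a minimal sketch. This is not the project's actual code: the function names (`build_prompt`, `parse_choice`, `select_behavior`), the prompt wording, and the `generate` callable that stands in for the local model are all hypothetical. It assumes perception produces an emotion label and a gesture label, and that the model is asked to answer with the index of one pre-recorded behavior. Invalid replies fall back to a default behavior so the robot always has something to play.

```python
import re
from typing import Callable, Sequence


def build_prompt(emotion: str, gesture: str, behaviors: Sequence[str]) -> str:
    """Format the perception labels and a numbered behavior menu as one prompt."""
    menu = "\n".join(f"{i}: {name}" for i, name in enumerate(behaviors))
    return (
        f"The person looks {emotion} and is making the gesture '{gesture}'.\n"
        f"Choose the best robot response from this list:\n{menu}\n"
        "Answer with the number only."
    )


def parse_choice(reply: str, n_behaviors: int, fallback: int = 0) -> int:
    """Extract the first integer from the reply; use the fallback if it is missing or out of range."""
    match = re.search(r"\d+", reply)
    if match is None:
        return fallback
    index = int(match.group())
    return index if 0 <= index < n_behaviors else fallback


def select_behavior(
    emotion: str,
    gesture: str,
    behaviors: Sequence[str],
    generate: Callable[[str], str],  # hypothetical wrapper around the local model
    fallback: int = 0,
) -> str:
    """Ask the model for a behavior index and return the matching behavior name."""
    reply = generate(build_prompt(emotion, gesture, behaviors))
    return behaviors[parse_choice(reply, len(behaviors), fallback)]


if __name__ == "__main__":
    # Stand-in for the model: always answers "2".
    demo_behaviors = ["idle", "nod", "wave_back"]
    print(select_behavior("happy", "wave", demo_behaviors, lambda prompt: " 2\n"))
```

Asking for an index rather than a free-text name keeps the reply short and easy to validate, which matters for a small quantized model whose output may not follow the instruction exactly.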